Infrastructure Model: Marketplace vs. Dedicated Servers

When evaluating alternatives to Vast.ai, the most important question isn't price; it's who controls the hardware. The choice between GPU Mart's dedicated GPU hosting model and Vast.ai's peer-to-peer marketplace leads to fundamentally different outcomes across performance, reliability, support, and total cost.

Peer-to-Peer GPU Marketplace

Vast.ai connects buyers to independent GPU providers — it does not own any hardware. Think of it as an Airbnb for GPUs.

- 📅 Founded 2018 · 123,000+ users · 17,000+ GPUs globally

- ⚠️ No owned data centers — commission-based model

- ⚠️ Each instance is effectively a different environment

- ⚠️ Inconsistent hardware quality across providers

- ⚠️ Variable network performance, no central control

Dedicated GPU Infrastructure

GPU Mart owns and operates all hardware — GPU VPS and GPU bare metal dedicated servers — backed by 20+ years of hosting expertise.

- ✓ 3,000+ self-owned GPU cards · 50+ employees

- ✓ No third-party providers — full hardware control

- ✓ Consistent hardware standards across all servers

- ✓ U.S.-based Tier I data center (Dallas)

- ✓ Predictable, enterprise-level performance

Instance Architecture & Resource Allocation

Vast.ai: Shared Resource Pool

- ⚠️ GPU instances share CPU/RAM with other users

- ⚠️ Each provider sets their own limits and pricing

- ⚠️ Host load must be evaluated manually before deploying

- ✗ Local volumes only — no persistent managed storage

- ✗ Destroy instance = data deleted, recovery not guaranteed
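One way to approximate the manual host-load check is to start a short-lived test instance and look at the load average before committing a long job. The sketch below is a minimal illustration only: the helper names and the 0.7 per-core threshold are assumptions, not part of any Vast.ai tooling, and inside a container the reported load usually (but not always) reflects the whole host.

```python
import os


def load_per_core(load_avg_1m: float, cpu_count: int) -> float:
    """Return the 1-minute load average divided by the logical CPU count."""
    if cpu_count <= 0:
        raise ValueError("cpu_count must be positive")
    return load_avg_1m / cpu_count


def host_looks_busy(load_avg_1m: float, cpu_count: int, threshold: float = 0.7) -> bool:
    """Flag a host whose per-core load exceeds the (assumed) threshold."""
    return load_per_core(load_avg_1m, cpu_count) > threshold


if __name__ == "__main__":
    # os.getloadavg() is available on Unix-like systems only.
    one_minute, _, _ = os.getloadavg()
    cores = os.cpu_count() or 1
    print(f"load per core: {load_per_core(one_minute, cores):.2f}")
    print("busy" if host_looks_busy(one_minute, cores) else "ok")
```

A single reading is only a snapshot; sampling a few times over several minutes gives a fairer picture of a shared host.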

GPU Mart: Fully Isolated Resources

- ✓ Dedicated GPU allocation — zero resource sharing

- ✓ Isolated CPU & RAM per server

- ✓ Persistent SSD/NVMe storage included in plan

- ✓ No performance contention from neighbours

- ✓ Full root + IPMI control

Performance & Stability: Real-World Impact

GPU model specs on paper tell you very little about what you'll actually experience in production. Here's how the two platforms compare on the metrics that matter for sustained AI workloads.

Vast.ai: Variable Performance

Even filtering by GPU model, region, and CUDA version, actual throughput depends on the host's shared resources and network conditions.

- ⚠️ Bandwidth: 500 Mbps → 10 Gbps (shared, varies by provider)

- ✗ Inconsistent training speed across providers

- ✗ Occasional crashes and unpredictable latency

- ✗ Same GPU ≠ same performance

- ⚠️ Especially problematic for LLM inference & Stable Diffusion
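Run-to-run variability can be put into a number by repeating the same benchmark several times and computing the coefficient of variation (standard deviation divided by mean) of the measured throughput. The sketch below assumes you already have throughput samples from your own benchmark; the sample values and host names are hypothetical, not measurements from either platform.

```python
from statistics import mean, stdev


def coefficient_of_variation(samples: list[float]) -> float:
    """Return stdev / mean for a list of throughput samples (0.0 = perfectly stable)."""
    if len(samples) < 2:
        raise ValueError("need at least two samples")
    avg = mean(samples)
    if avg == 0:
        raise ValueError("mean throughput must be non-zero")
    return stdev(samples) / avg


# Hypothetical samples/sec from five identical training runs on two hosts
# that advertise the same GPU model.
host_a = [100.0, 102.0, 98.0, 101.0, 99.0]
host_b = [100.0, 70.0, 120.0, 85.0, 125.0]

for name, runs in (("host_a", host_a), ("host_b", host_b)):
    print(f"{name}: mean={mean(runs):.1f} cv={coefficient_of_variation(runs):.1%}")
```

A lower coefficient of variation means more predictable throughput, which matters more for latency-sensitive inference than the peak figure does.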

GPU Mart: Consistent Performance

Dedicated hardware eliminates variability at the source — your server performs the same at 3 AM as it does at peak hour.

- ✓ 100 Mbps – 1 Gbps unmetered bandwidth

- ✓ Stable training throughput, run to run

- ✓ Consistent inference latency for production APIs

- ✓ 99.9% uptime SLA

- ✓ No bandwidth overage charges
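To make the 99.9% uptime figure concrete, the arithmetic below converts an uptime percentage into the downtime it still permits. This is a generic calculation under the assumption of a 30-day month; how an SLA actually measures and credits downtime depends on the provider's terms, which are not covered here.

```python
def allowed_downtime_minutes(uptime_percent: float, days: float = 30.0) -> float:
    """Return the minutes of downtime an uptime percentage permits over `days` days."""
    if not 0 <= uptime_percent <= 100:
        raise ValueError("uptime_percent must be between 0 and 100")
    total_minutes = days * 24 * 60
    return total_minutes * (100 - uptime_percent) / 100


# 99.9% over a 30-day month leaves roughly 43 minutes of permitted downtime.
print(f"{allowed_downtime_minutes(99.9):.1f} minutes per 30-day month")
```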

Pricing Comparison: Cheap vs. Predictably Affordable

Vast.ai pricing looks attractive at first glance, but the real number only emerges after adding bandwidth charges, storage fees, paused-instance billing, and job-failure overhead. This section puts GPU Mart and Vast.ai pricing side by side so you can see the true monthly cost.

Vast.ai: Hourly billing + multiple add-on costs

- ⚠️ GPU rental + disk + bandwidth + provider-specific fees

- ⚠️ Paused instance: $0.14 – $0.43/day (still billed)

- ⚠️ Bandwidth: $0.004/hr/TB (1G) · $4–$20/TB (10G)

- ⚠️ Storage: $0.006 – $0.02/hr, varies by provider

- ✓ Discounts: 1 mo 20% · 3 mo 30% · 6 mo 40%

- ✓ Prepaid credit system, flexible top-up
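As a rough illustration of how these add-on charges combine, the sketch below sums compute, storage, bandwidth, and paused-day fees into one monthly figure. The `MarketplaceUsage` class, the `estimate_monthly_cost` function, and the $0.40/hr GPU rate are illustrative assumptions, not Vast.ai's billing API or real rates. The storage, bandwidth, and paused-day rates are picked from the ranges quoted above, and actual billing rules may differ by provider.

```python
from dataclasses import dataclass


@dataclass
class MarketplaceUsage:
    gpu_rate_per_hour: float      # hypothetical GPU rental rate, USD/hr
    storage_rate_per_hour: float  # USD/hr while the instance is running
    running_hours: float          # hours the instance was running this month
    bandwidth_tb: float           # data transferred, in TB
    bandwidth_rate_per_tb: float  # USD per TB
    paused_days: float            # days the instance sat paused
    paused_rate_per_day: float    # USD per paused day


def estimate_monthly_cost(u: MarketplaceUsage) -> float:
    """Sum the separately billed components into one monthly total (simplified model)."""
    compute = u.gpu_rate_per_hour * u.running_hours
    storage = u.storage_rate_per_hour * u.running_hours
    bandwidth = u.bandwidth_rate_per_tb * u.bandwidth_tb
    paused = u.paused_rate_per_day * u.paused_days
    return compute + storage + bandwidth + paused


example = MarketplaceUsage(
    gpu_rate_per_hour=0.40,      # assumption, not a quoted price
    storage_rate_per_hour=0.01,  # within the $0.006 to $0.02/hr range above
    running_hours=500,
    bandwidth_tb=2,
    bandwidth_rate_per_tb=4.0,   # low end of the $4 to $20/TB range above
    paused_days=9,
    paused_rate_per_day=0.14,    # low end of the $0.14 to $0.43/day range above
)
print(f"estimated monthly cost: ${estimate_monthly_cost(example):.2f}")
```

The model ignores job-failure overhead and any provider-specific fees, so it should be read as a lower bound for the scenario entered.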

GPU Mart: Fixed monthly pricing, zero hidden fees

- ✓ All-inclusive: CPU, RAM, SSD, dedicated IP, bandwidth

- ✓ No bandwidth traffic charges — ever

- ✓ No surprise add-on fees

- ✓ Credit card & PayPal accepted

- ✓ Discounts: 3 mo 10% · 12 mo 20%

- ✓ Promotional deals up to 55% off
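The term discounts can be turned into an effective monthly price with simple arithmetic. The sketch below assumes the discount is a flat percentage off the monthly list price and that promotional deals are not stacked on top; neither assumption is confirmed by the pricing details above. The $449 list price is the RTX 5090 plan figure used elsewhere in this comparison.

```python
# Term length in months -> discount fraction, from the list above.
GPU_MART_TERM_DISCOUNTS = {3: 0.10, 12: 0.20}


def effective_monthly_price(list_price: float, term_months: int,
                            discounts: dict[int, float]) -> float:
    """Apply the discount for the chosen term; terms without a discount pay list price."""
    rate = discounts.get(term_months, 0.0)
    return list_price * (1 - rate)


for term in (1, 3, 12):
    price = effective_monthly_price(449.0, term, GPU_MART_TERM_DISCOUNTS)
    print(f"{term:>2}-month term: ${price:.2f}/mo")
```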

Head-to-Head GPU Pricing Breakdown

GPU Mart's all-inclusive pricing — with substantially more RAM, CPU cores, and storage — frequently wins on total value per dollar.

| GPU | Platform | Included Config | Monthly Price | Result |
|---|---|---|---|---|
| RTX Pro 6000 | Vast.ai | 16 GB disk · CPU/RAM shared & variable | $765 – $962/mo | - |
| RTX Pro 6000 | GPU Mart | 90 GB RAM · 32 CPU cores · 400 GB SSD · 1 Gbps unmetered | $599/mo | GPU Mart 22–38% cheaper |
| RTX 5060 | Vast.ai | 16 GB disk · CPU/RAM shared & variable | $125/mo | - |
| RTX 5060 | GPU Mart | 28 GB RAM · 16 CPU cores · 240 GB SSD · 200 Mbps unmetered | $99/mo | GPU Mart about 21% cheaper |
| RTX 5090 | Vast.ai | 16 GB disk · CPU/RAM shared & variable | $268 – $435/mo | - |
| RTX 5090 | GPU Mart | 90 GB RAM · 32 CPU cores · 400 GB SSD · 500 Mbps unmetered | $449/mo (less after discount) | Competitive (above Vast.ai's range at list price) |
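The "cheaper by" figures can be recomputed from the listed monthly prices. The sketch below is only a convenience for checking the arithmetic: the `percent_cheaper` helper is illustrative, and the comparison looks at list price alone, ignoring the difference in included RAM, CPU cores, and storage.

```python
def percent_cheaper(price: float, reference: float) -> float:
    """Return how much cheaper `price` is than `reference`, as a percentage of `reference`.

    A negative result means `price` is more expensive than `reference`.
    """
    if reference <= 0:
        raise ValueError("reference price must be positive")
    return (reference - price) / reference * 100


# (GPU, GPU Mart monthly price, Vast.ai low, Vast.ai high), taken from the table.
rows = [
    ("RTX Pro 6000", 599.0, 765.0, 962.0),
    ("RTX 5060", 99.0, 125.0, 125.0),
    ("RTX 5090", 449.0, 268.0, 435.0),
]

for gpu, gpu_mart, vast_low, vast_high in rows:
    low = percent_cheaper(gpu_mart, vast_low)
    high = percent_cheaper(gpu_mart, vast_high)
    print(f"{gpu}: GPU Mart is {low:.1f}% to {high:.1f}% cheaper than Vast.ai")
```

A negative percentage in the output indicates that the Vast.ai price is lower for that end of the range.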

Platform UX & Deployment Experience

Vast.ai: Powerful but complex

Advanced GPU filtering and flexible Docker-based templates, but the learning curve is real — especially when things go wrong.

- ✓ Advanced GPU instance filtering

- ✓ 38+ Docker image templates

- ✓ Jupyter, SSH, CLI support

- ✗ Complex UI — steep learning curve for beginners

- ✗ Instance failures are a "black box"

- ✗ Manual SSH key setup required

GPU Mart: Guided setup, ready to run

Managed infrastructure with free pre-installation of 20+ AI frameworks — spend time on your model, not your environment.

- ✓ Simpler, guided deployment process

- ✓ Free pre-install: ComfyUI, DeepSeek, Ollama, LLaMA 3.1 & more

- ✓ Full root + IPMI access

- ✓ GPU & hardware monitoring assistance

- ✓ Supports all legal applications

- ✓ Expert support response within 2 minutes

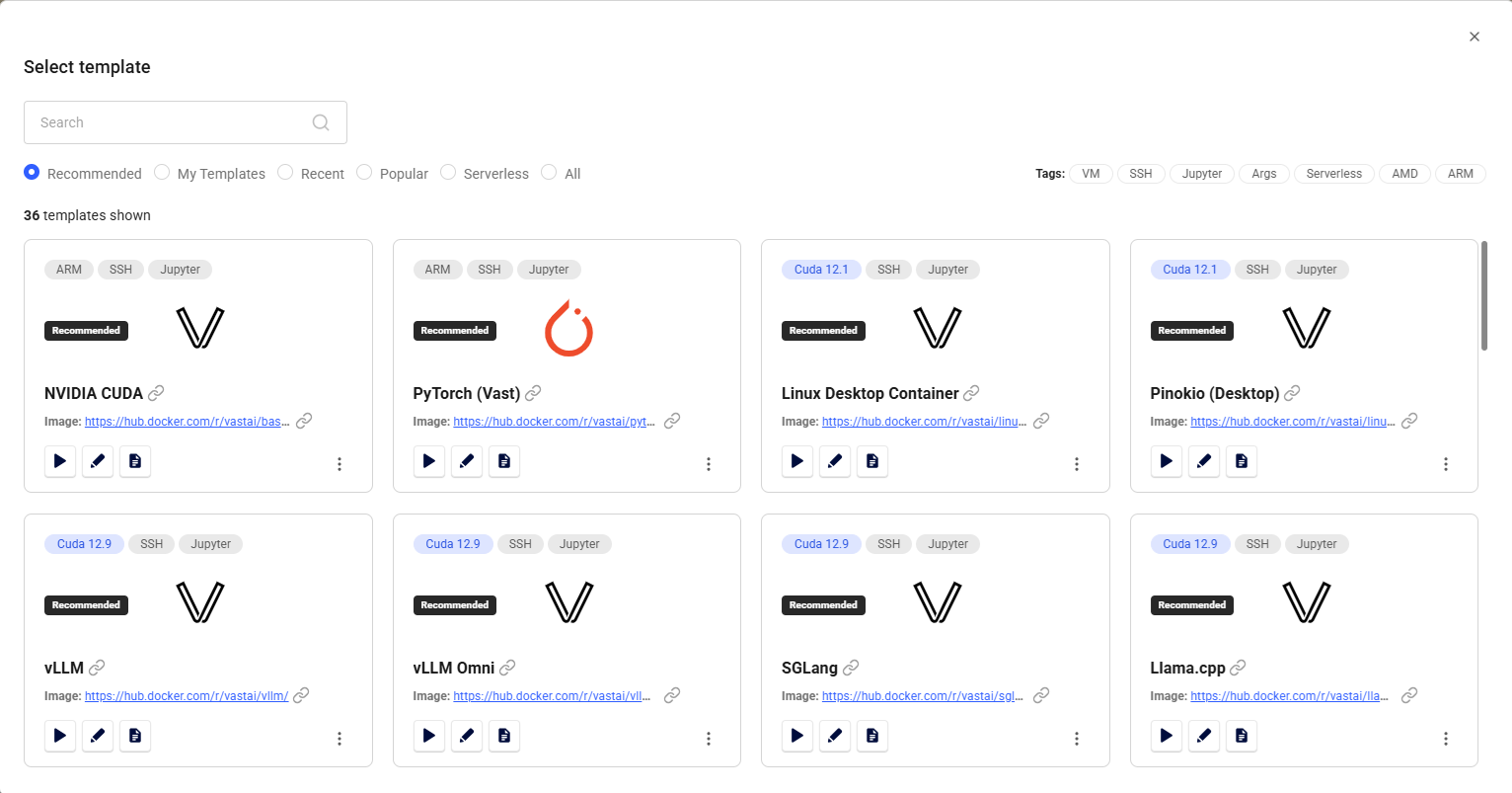

Vast.ai Interface

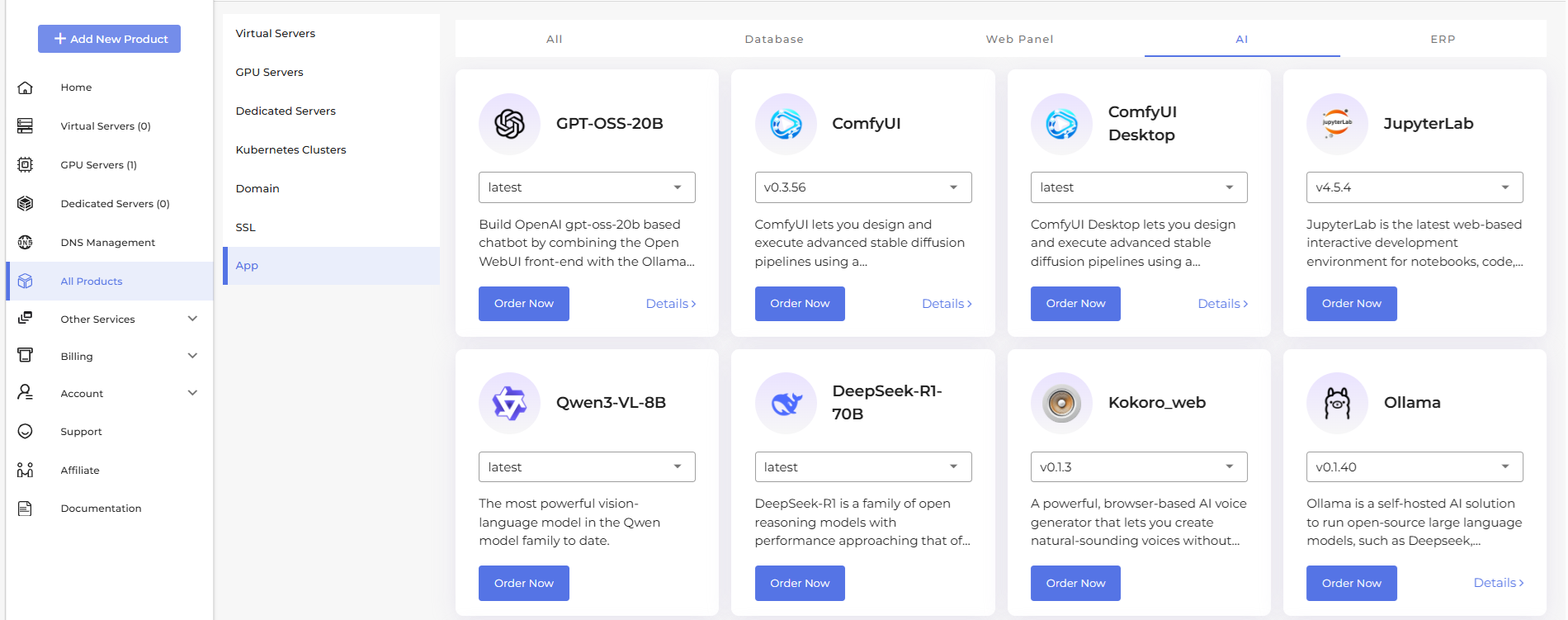

GPU Mart Control Panel — Pre-installed AI Software

Support & Operations

Vast.ai

- 💬 Live chat available

- ⏱️ Response time: 3–10 minutes

- ⚠️ Hardware issues escalate to distributed providers

- ⚠️ No centralized hardware team; accountability is split

GPU Mart

- ✓ Dedicated GPU experts on staff

- ✓ Response within 2 minutes

- ✓ Hardware replacement within 4 hours

- ✓ Proactive server health monitoring

- ✓ Deployment assistance included

Which Platform Fits Your Use Case?

Vast.ai: Experimentation & research

- 🧪 Prototyping and quick experiments

- 🎓 Academic research & coursework

- 💸 Extremely cost-sensitive, short-term jobs

- 🔬 Workloads where interruptions are tolerable

GPU Mart: Production AI systems

- ✓ Production AI systems & customer-facing APIs

- ✓ LLM hosting (DeepSeek, LLaMA, Ollama)

- ✓ Stable Diffusion & ComfyUI services

- ✓ Long-running training jobs

- ✓ Teams requiring compliance & SLA guarantees

Infrastructure Reliability, Compliance & SLA

For AI workloads beyond experimentation, infrastructure trust becomes a deciding factor — especially for teams serving customers or operating under regulatory requirements.

U.S. Tier I Data Center

Dallas-based facility with SOC compliance support, ISO 27001 (Information Security), and ISO 27701 (Privacy Management).

99.9% Uptime SLA

Clear operational guarantees backed by hardware replacement within 4 hours — not vague promises.

Dedicated U.S. IP

Clean IP reputation not shared across unknown tenants — critical for AI APIs and self-hosted LLM deployments.

ISO 27001 & 27701

Information Security & Privacy Management certifications — essential for enterprise and regulated business deployments.

GPU Mart Review vs Vast.ai: The Bottom Line

The real question isn't which platform is cheaper; it's whether your workload can tolerate uncertainty. Both platforms serve legitimate needs, but for fundamentally different users. For production deployments, dedicated hosting is the stronger alternative to Vast.ai.

Vast.ai: Flexible · Low-cost · Experimental

Best when price is the primary constraint and occasional interruptions are tolerable. Ideal for students, researchers, and developers exploring ideas who don't yet need production-grade SLA guarantees.

GPU Mart: Stable · Dedicated · Production-Ready

The strongest Vast.ai alternative for teams running real AI systems: LLM hosting, Stable Diffusion services, long-running training, and customer-facing APIs. GPU Mart's dedicated hosting delivers performance consistency, SLA accountability, and cost predictability that marketplace platforms structurally cannot match.